选择最佳时期数来训练 Keras 中的神经网络

在样本数据上训练神经网络的关键问题之一是过度拟合。当用于训练神经网络模型的 epoch 数量超过必要时,训练模型会在很大程度上学习特定于样本数据的模式。这使得模型无法在新数据集上表现良好。该模型在训练集(样本数据)上提供了很高的准确度,但在测试集上未能达到良好的准确度。换句话说,模型通过过度拟合训练数据而失去了泛化能力。

为了减轻过度拟合并增加神经网络的泛化能力,应该训练模型以获得最佳时期数。一部分训练数据专门用于模型的验证,在每个训练阶段后检查模型的性能。监控训练集和验证集的损失和准确性,以查看模型开始过度拟合的时期数。

keras.callbacks.callbacks.EarlyStopping()

任何一个损失/准确度值都可以通过提前停止回调函数来监控。如果正在监控损失,则当观察到损失值增加时,训练就会停止。或者,如果正在监控准确性,则在观察到准确性值下降时训练会停止。

Syntax with default values:

keras.callbacks.callbacks.EarlyStopping(monitor=’val_loss’, min_delta=0, patience=0, verbose=0, mode=’auto’, baseline=None, restore_best_weights=False)

Understanding few important arguments:

- monitor: The value to be monitored by the function should be assigned. It can be validation loss or validation accuracy.

- mode: It is the mode in which change in the quantity monitored should be observed. This can be ‘min’ or ‘max’ or ‘auto’. When the monitored value is loss, its value is ‘min’. When the monitored value is accuracy, its value is ‘max’. When the mode is set is ‘auto’, the function automatically monitors with the suitable mode.

- min_delta: The minimum value should be set for the change to be considered i.e., Change in the value being monitored should be higher than ‘min_delta’ value.

- patience: Patience is the number of epochs for the training to be continued after the first halt. The model waits for patience number of epochs for any improvement in the model.

- verbose: Verbose is an integer value-0, 1 or 2. This value is to select the way in which the progress is displayed while training.

- Verbose = 0: Silent mode-Nothing is displayed in this mode.

- Verbose = 1: A bar depicting the progress of training is displayed.

- Verbose = 2: In this mode, one line per epoch, showing the progress of training per epoch is displayed.

- restore_best_weights: This is a boolean value. True value restores the weights which are optimal.

寻找最佳时期数以避免在 MNIST 数据集上过拟合。

第 1 步:加载数据集和预处理

import keras

from keras.utils.np_utils import to_categorical

from keras.datasets import mnist

# Loading data

(train_images, train_labels), (test_images, test_labels)= mnist.load_data()

# Reshaping data-Adding number of channels as 1 (Grayscale images)

train_images = train_images.reshape((train_images.shape[0],

train_images.shape[1],

train_images.shape[2], 1))

test_images = test_images.reshape((test_images.shape[0],

test_images.shape[1],

test_images.shape[2], 1))

# Scaling down pixel values

train_images = train_images.astype('float32')/255

test_images = test_images.astype('float32')/255

# Encoding labels to a binary class matrix

y_train = to_categorical(train_labels)

y_test = to_categorical(test_labels)

第 2 步:构建 CNN 模型

from keras import models

from keras import layers

model = models.Sequential()

model.add(layers.Conv2D(32, (3, 3), activation ="relu",

input_shape =(28, 28, 1)))

model.add(layers.MaxPooling2D(2, 2))

model.add(layers.Conv2D(64, (3, 3), activation ="relu"))

model.add(layers.MaxPooling2D(2, 2))

model.add(layers.Flatten())

model.add(layers.Dense(64, activation ="relu"))

model.add(layers.Dense(10, activation ="softmax"))

model.summary()

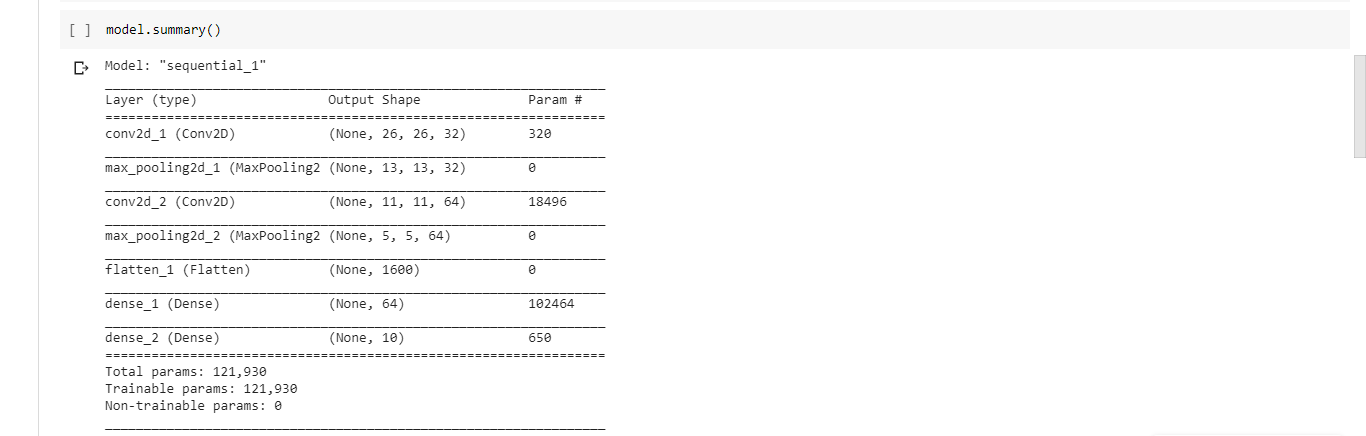

输出:模型摘要

第 4 步:使用 RMSprop 优化器、分类交叉熵损失函数和准确度作为成功指标来编译模型

model.compile(optimizer ="rmsprop", loss ="categorical_crossentropy",

metrics =['accuracy'])

步骤 5:通过划分当前训练集创建验证集和训练集

val_images = train_images[:10000]

partial_images = train_images[10000:]

val_labels = y_train[:10000]

partial_labels = y_train[10000:]

第 6 步:初始化 earlystopping 回调并训练模型

from keras import callbacks

earlystopping = callbacks.EarlyStopping(monitor ="val_loss",

mode ="min", patience = 5,

restore_best_weights = True)

history = model.fit(partial_images, partial_labels, batch_size = 128,

epochs = 25, validation_data =(val_images, val_labels),

callbacks =[earlystopping])

训练在第 11 个 epoch 停止,即模型将从第 12 个 epoch 开始过度拟合。因此,训练大多数数据集的最佳 epoch 数是 11。

在不使用 Early Stopping 回调函数的情况下观察损失值:

将模型训练至 25 个 epoch,并根据 epoch 数绘制训练损失值和验证损失值。情节看起来像:

推理:

随着 epoch 的数量增加到超过 11,训练集损失减少并几乎为零。然而,验证损失会增加,这表明模型对训练数据的过度拟合。

参考:

- https://keras.io/callbacks/

- https://keras.io/datasets/